For Tesla owners in France, the convenience of Autopilot and Full Self-Driving (FSD) is undeniable, transforming daily commutes into moments of respite. Yet a critical legal question lingers in the background of every assisted drive: in the event of an accident, where does responsibility truly lie? The French legal framework provides a clear answer that every driver must understand before engaging these systems.

The Legal Bedrock: The Driver's Unchanging Responsibility

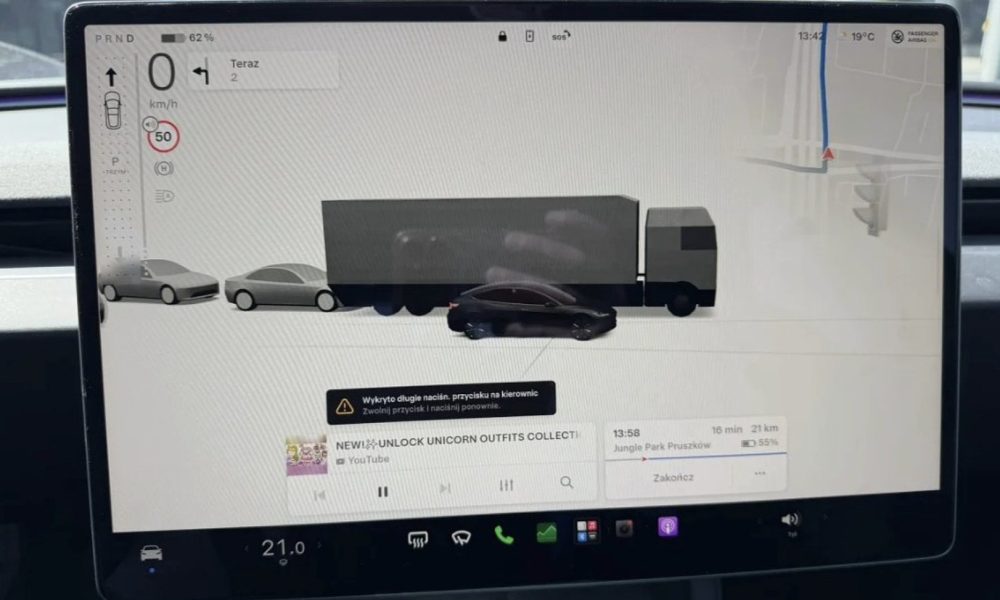

French law cuts through the technological complexity with a steadfast principle. According to the Highway Code (Code de la Route), the person behind the wheel is always considered the driver and remains legally responsible for the vehicle's trajectory and control, regardless of any advanced driver-assistance system (ADAS) in operation. This means that even with FSD Beta active, the law views you, the human in the driver's seat, as the ultimate operator. The systems are officially classified as Level 2 automation under the SAE scale, a crucial designation that reinforces the driver's continuous duty of vigilance and supervision.

Insurance and Liability in the Aftermath of a Crash

This legal stance directly dictates insurance outcomes. In a collision, your car insurance, not Tesla's, is the first port of call. The insurer will investigate whether you were meeting your obligations: hands on the wheel, eyes on the road, and ready to retake full control immediately. If you were negligent in supervising the system, you could be held liable, potentially affecting your premium and no-claims bonus. A product liability claim against Tesla would only become a separate, arduous consideration if a specific and provable software or hardware defect was the direct and sole cause of the incident, a notoriously high bar to clear.

The analysis becomes more nuanced when considering potential criminal fault. Prosecutors would examine whether the driver's behavior constituted offenses such as endangerment of others or involuntary injury. Over-reliance on Autopilot, such as engaging it in conditions it is not designed for or blatantly ignoring its warnings, could be used as evidence of negligence. The system's own warnings, which repeatedly remind the driver to maintain control, become a key piece of evidence in such proceedings, underscoring that responsibility rests primarily with the driver.

Implications for Tesla Owners and Investors

For Tesla owners in France, the message is unequivocal: treat Autopilot and FSD as sophisticated co-pilots, not autonomous chauffeurs. Your legal exposure is significant, and insurance is not a guaranteed shield against personal liability. This reality calls for a disciplined approach to system use: comply with every prompt and maintain an active driving posture. For investors, this legal landscape highlights the long regulatory journey ahead for true vehicle autonomy. It underscores that Tesla's path to monetizing FSD is inextricably linked to a global evolution in liability frameworks, a process that will move far more slowly than software iteration. The company's ability to navigate and potentially reshape these regulations will be as critical as its technological advancements.