In a significant regulatory vote of confidence, the U.S. National Highway Traffic Safety Administration (NHTSA) has closed its long-running probe into Tesla's Actually Smart Summon feature without demanding a recall. The decision concludes a nearly two-year investigation that scrutinized the driver-assist parking function and ultimately determined that reported incidents were infrequent and posed minimal risk. The closure removes a regulatory overhang for Tesla and underscores the complex challenges and evolving standards for assessing advanced vehicle automation in public spaces.

A Probe Focused on Low-Speed Automation

The NHTSA's Office of Defects Investigation (ODI) initiated the preliminary evaluation in October 2022, prompted by consumer reports of the Actually Smart Summon feature causing minor collisions in parking lots. The feature, part of Tesla's Full Self-Driving and Enhanced Autopilot suites, allows a vehicle to navigate autonomously at low speeds across a parking lot to its owner. Investigators analyzed over 800 consumer complaints and several crash reports linked to the technology. Their final analysis found that the vast majority of incidents involved low-speed contact with stationary objects like fences or posts, with no reported serious injuries. The agency noted that Tesla had issued software updates throughout the investigation period that improved the system's performance, a factor in its decision to close the case.

Context: The Regulatory Tightrope for Driver-Assist Tech

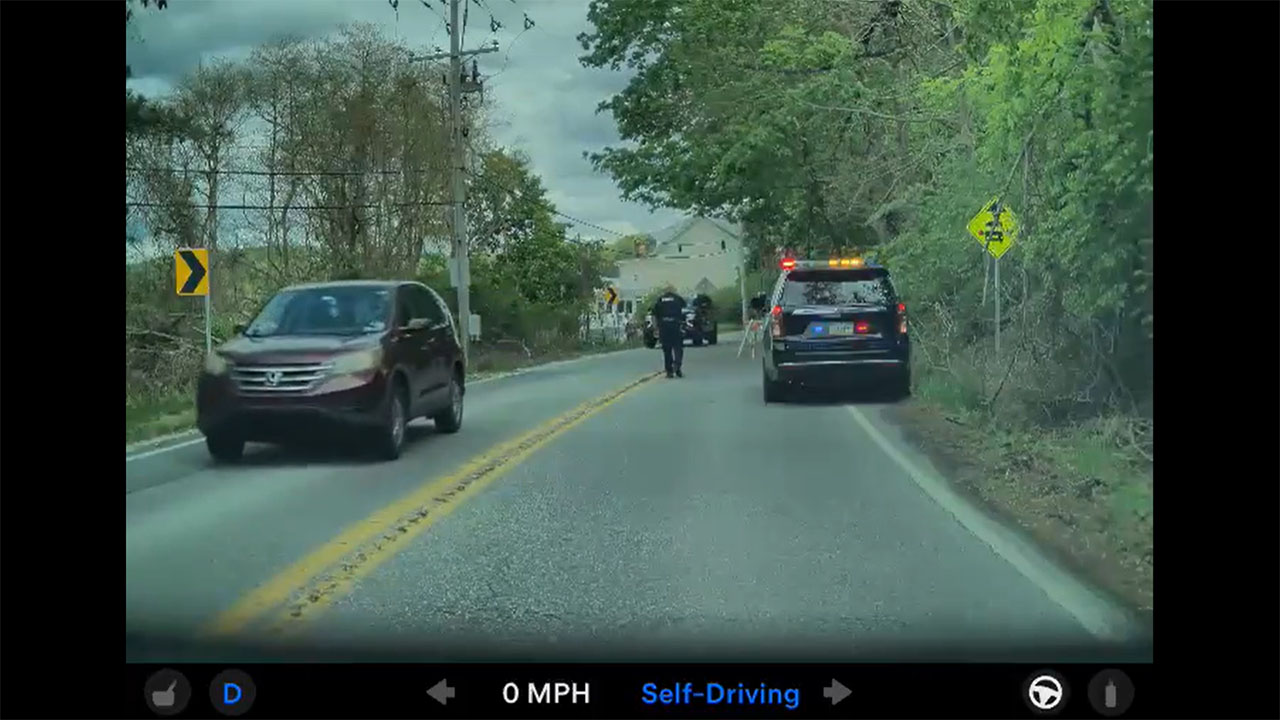

This investigation highlights the delicate balance regulators must strike between fostering innovation and ensuring public safety. Actually Smart Summon operates in unpredictable, unstructured environments (active parking lots with pedestrians, shopping carts, and moving vehicles) that in many respects pose a greater challenge than highway driving. The NHTSA's closure signals a recognition that minor, low-risk incidents in such complex scenarios may not constitute a safety defect, especially when the driver remains responsible and the system is continually updated. However, the probe itself served as a public reminder that "automated" does not mean "infallible," and that the onus remains squarely on the human operator to monitor the vehicle at all times.

For Tesla, the outcome is a clear win. The company has consistently maintained that its driver-assistance systems, when used correctly, are safer than human driving alone. The NHTSA's finding of only rare and minor incidents supports that narrative for this specific feature. It also validates Tesla's iterative, over-the-air update approach to refining its systems post-deployment. In the broader EV and automotive tech landscape, this decision sets a precedent for how regulators may evaluate low-speed, geofenced automation features that occasionally exhibit imperfect behavior but don't rise to the level of a systemic hazard.

Implications for Owners and the Road Ahead

For current Tesla owners with access to Actually Smart Summon, the investigation's closure provides reassurance but not a license for complacency. The technology remains a convenience feature requiring vigilant oversight. Investors, meanwhile, can view this as a reduction in regulatory risk: it removes a potential recall expense and a source of negative headlines. More importantly, it allows Tesla and the NHTSA to focus resources on more critical ongoing investigations, particularly those concerning Tesla's Autopilot systems on public roads. As the industry charges toward greater autonomy, this case suggests that the regulatory bar for finding a "safety defect" in cutting-edge, low-speed features is notably high, a standard that is likely to influence the development and deployment of future automated driving functions.