Tesla has begun the public rollout of Full Self-Driving (FSD) Supervised v14.3, shipped as firmware version 2026.2.9.6. CEO Elon Musk had earlier described the release as the "big piece of the puzzle," signaling foundational architectural changes under the hood. The update promises more than incremental improvements: faster system responses and more efficient AI execution, with the aim of a more natural and confident driving experience.

The Core of v14.3: A Rewritten AI Compiler and Sharper Reactions

At the heart of FSD v14.3 is a re-engineered AI compiler stack, now rebuilt on MLIR (Multi-Level Intermediate Representation), a compiler framework from the LLVM project that represents a program at several levels of abstraction and lowers it step by step toward hardware-specific code. This overhaul is far more than a simple code optimization. MLIR allows for more efficient compilation of the massive neural networks that power FSD, potentially leading to faster processing of sensor data and more effective use of the vehicle's Hardware 3 (HW3) and Hardware 4 (HW4) computer systems. The most immediate benefit for drivers is improved reaction time, with decision-making and control responses that feel quicker and more human-like in complex traffic scenarios.

Decoding the Release Notes and Rollout Strategy

Tesla's official release notes for version 2026.2.9.6 briefly highlight the improved reaction time and the compiler transition. As is standard for major FSD updates, the rollout is progressive, starting with a small subset of non-employee owners before expanding to the wider fleet over the coming days and weeks. This cautious, data-driven approach allows Tesla to monitor real-world performance and address unforeseen edge cases before a broad deployment. Owners can check for the update on their vehicle's touchscreen under 'Software' and should make sure the vehicle is connected to Wi-Fi with a strong signal.
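For readers curious how a progressive rollout can work in general, here is a minimal Python sketch of one common technique: hash-based percentage bucketing. It is not Tesla's actual mechanism, which is not public. The function names, the vehicle ID format, and the percentages are all hypothetical and chosen only for illustration.

```python
import hashlib


def rollout_bucket(vehicle_id: str, release: str) -> int:
    """Map a vehicle to a stable bucket in [0, 10000) for one release.

    Hypothetical helper: hashing the release together with the vehicle ID
    gives each release its own ordering of the fleet.
    """
    digest = hashlib.sha256(f"{release}:{vehicle_id}".encode("utf-8")).digest()
    # Interpret the first 8 bytes as an unsigned integer, then reduce it
    # to basis points (hundredths of a percent).
    return int.from_bytes(digest[:8], "big") % 10_000


def is_eligible(vehicle_id: str, release: str, rollout_percent: float) -> bool:
    """Return True if the vehicle falls inside the current rollout percentage."""
    return rollout_bucket(vehicle_id, release) < rollout_percent * 100


if __name__ == "__main__":
    # Illustrative fleet of made-up vehicle IDs.
    fleet = [f"VEH-{i:05d}" for i in range(2000)]
    for percent in (5, 25, 100):
        count = sum(is_eligible(v, "2026.2.9.6", percent) for v in fleet)
        print(f"{percent}% stage: {count} of {len(fleet)} vehicles eligible")
```

Because each vehicle's bucket is fixed for a given release, raising the percentage only ever adds vehicles. Anyone included in an early wave stays included as the rollout widens.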

The shift to an MLIR-based compiler represents a strategic investment in the scalability and longevity of the FSD stack. By creating a more optimized pathway between the AI models and the vehicle's hardware, Tesla engineers can iterate on neural network designs more rapidly and extract greater performance from the computers already installed in customer vehicles. This backend efficiency is crucial for handling the rapidly growing volume and complexity of real-world driving data, and it paves the way for smoother integration of future capabilities and hardware iterations.

Implications for the Tesla Ecosystem

For Tesla owners and investors, the successful wide-scale deployment of v14.3 carries substantial weight. A demonstrably smoother, safer, and more capable FSD experience strengthens the value proposition of the FSD package and Tesla's entire electric vehicle lineup, potentially boosting customer satisfaction and software attach rates. From an investment perspective, tangible progress in core AI and autonomy is a key driver for the company's long-term valuation thesis, reinforcing its technological lead in a fiercely competitive market.

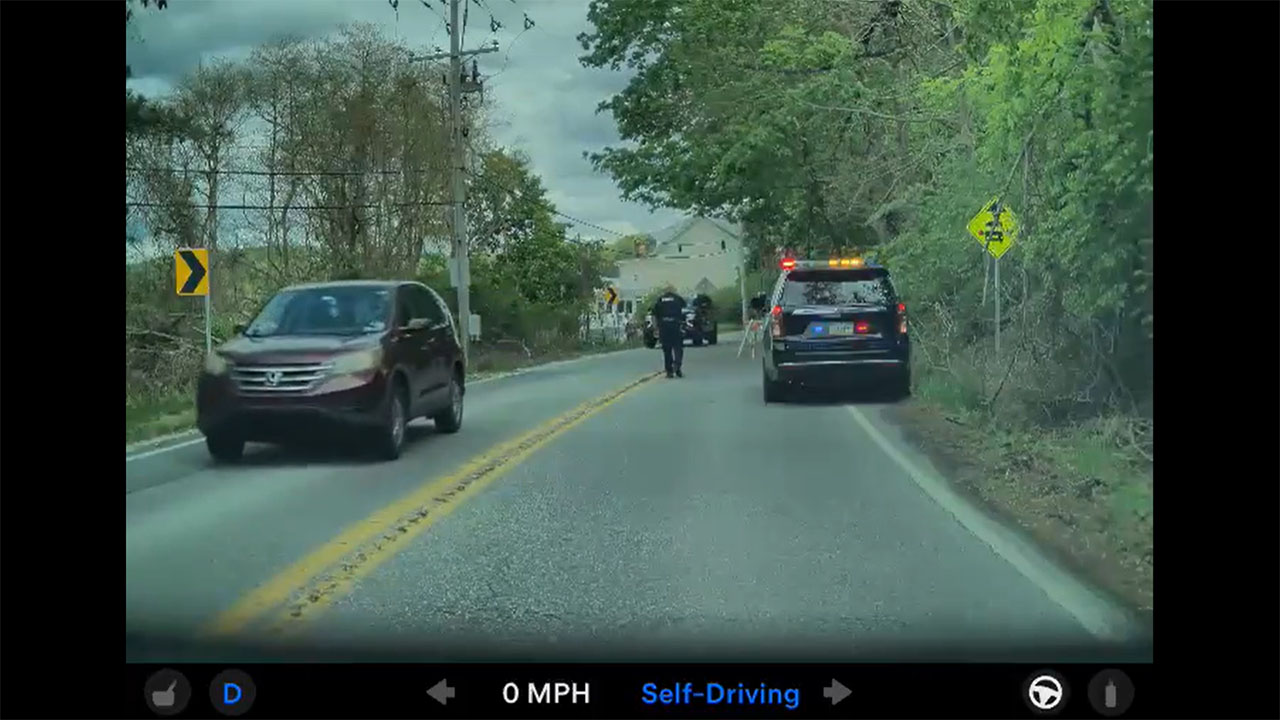

The true test, as always, will be on the road. If v14.3 delivers on its promise of markedly improved reaction times and overall driving polish, it could represent a turning point in public perception of Tesla's autonomy efforts. As the fleet data pours in, all eyes will be on how this "big piece of the puzzle" fits into Tesla's relentless march toward a fully autonomous future.