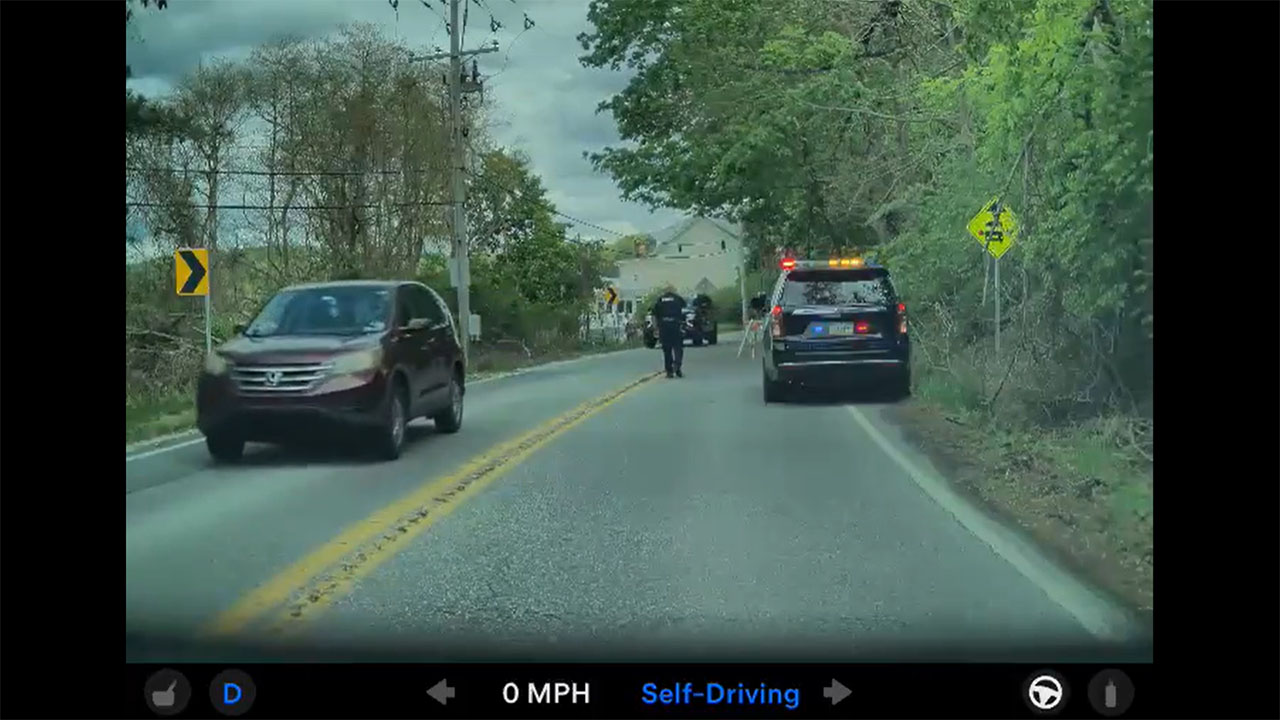

A viral video depicting a Tesla's "Full Self-Driving" system attempting to steer directly into a body of water has reignited the fierce debate over the safety and nomenclature of advanced driver-assistance technology. The clip, which has amassed over 1 million views, shows a Model Y approaching a T-intersection where the road curves left, but the car's FSD Beta software plots a course straight ahead, directly into a lake. The driver's quick intervention prevented a catastrophic outcome, but the incident stands as one of the starkest and most visually alarming examples of how the system can fail in edge-case scenarios.

A Pattern of Perilous Edge Cases

This is not an isolated incident but part of a concerning pattern documented by users. While Tesla's Full Self-Driving Beta system handles many routine driving tasks well, its performance at complex or unusual intersections, a common source of so-called "edge cases," has repeatedly drawn scrutiny. The lake incident exemplifies a critical failure in environmental interpretation: the software seemingly prioritized following painted lane markings that continued forward over recognizing the obvious physical barrier of the water. This highlights a fundamental challenge in AI-driven navigation: the system must not only see the world but also comprehend it with a level of common-sense reasoning that remains very difficult to engineer.

The Gaping Chasm Between Marketing and Reality

The viral video underscores the persistent and dangerous gap between the system's name and its actual capabilities. Tesla markets the feature as Full Self-Driving, a term that implies autonomous operation, yet legally and functionally it remains a Level 2 driver-assistance system requiring constant human supervision. This dissonance can encourage automation bias, the tendency of drivers to over-trust the technology and grow complacent. Tesla's own warnings and the system's frequent steering-wheel "nag" prompts confirm the need for vigilant oversight, yet the branding continues to fuel unrealistic expectations about the software's readiness for unsupervised operation.

Implications for Owners and the Road Ahead

For current and prospective Tesla owners, this incident is a potent reminder that FSD Beta is a co-pilot, not a chauffeur. Hands on the wheel and unwavering attention to the road are non-negotiable requirements, especially in areas with complex geography or non-standard road layouts. For investors, the video represents a tangible reputational and regulatory risk. Each high-profile failure provides ammunition for critics and safety advocates calling for stricter oversight of automated driving systems. It also pressures Tesla's engineering team to accelerate development of the next-generation "end-to-end neural network" that aims to replace more of the car's hard-coded logic with AI, a shift promised to handle exactly these kinds of unpredictable scenarios better. The path to true autonomy is proving to be longer, and far more littered with obstacles, than many anticipated.