A Tesla operating on the company's Full Self-Driving (FSD) beta software allegedly drove through a lowered railroad crossing arm, forcing its owner to take emergency action to avoid a collision with an oncoming train. The incident, reported by a Texas driver, represents one of the most dramatic and dangerous close calls involving the advanced driver-assistance system publicly described to date, reigniting critical debates about the technology's capabilities and the branding of systems that require constant human supervision.

A "Let Down" at a Critical Moment

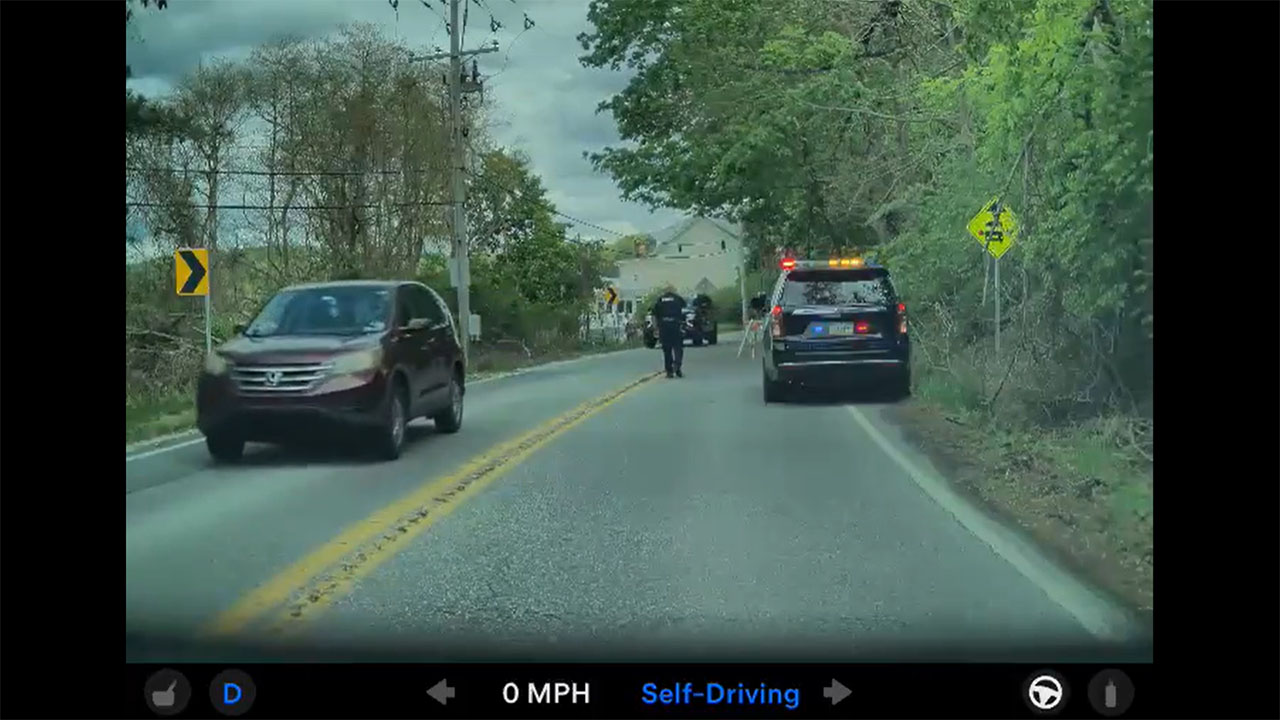

According to the driver, Joshua Brown, his Tesla Model 3 was engaged in FSD mode when it approached an active railroad crossing. Brown, who claims to have logged over 40,000 miles using Tesla's driver-assist systems, stated the vehicle failed to recognize the hazard and broke through the safety gate as warning lights flashed. With a train rapidly approaching, Brown reported he was forced to "punch the accelerator" to clear the tracks, narrowly avoiding a catastrophic impact. He described the event as the first time FSD had profoundly "let me down," highlighting a terrifying gap between the system's perceived intelligence and its real-world performance at a life-or-death decision point.

The Persistent Chasm Between Branding and Capability

This near-miss underscores the ongoing tension between Tesla's marketing of its FSD and Autopilot suites and their actual operational design domain. While the company emphasizes that these are Level 2 systems requiring an attentive driver ready to take over at any moment, the "Full Self-Driving" nomenclature can lead to dangerous complacency. Regulatory bodies, including NHTSA, have repeatedly scrutinized this naming convention. The railroad crossing incident exemplifies a known challenge for current vision-based driver-assistance systems: reliably interpreting unpredictable real-world infrastructure remains a formidable hurdle, especially in scenarios where failure carries severe consequences.

For Tesla owners and investors, this report is a stark reminder that the human behind the wheel remains the ultimate responsible agent, regardless of the software's sophistication. Every such incident adds to the regulatory and reputational headwinds facing the company's autonomy ambitions. For the broader electric vehicle and autonomous driving industry, it serves as a case study in the critical importance of clear communication, rigorous validation for edge-case scenarios, and the immense technical challenges that separate driver assistance from true vehicle autonomy. The path forward demands not just technological iteration, but also carefully managed public expectations and unwavering driver vigilance.