Tesla's push toward autonomous driving has taken a significant, ethically charged step forward. The latest iteration of its Full Self-Driving software, FSD v14.3 (2026.2.9.6), is rolling out with a core mission: to improve how the AI perceives and reacts to the most unpredictable and vulnerable entities on the road. This isn't just about smoother highway merges; it's about a faster reaction time that could meaningfully improve safety for pedestrians, cyclists, and wildlife.

A 20% Leap in Reaction Time: The Core of v14.3

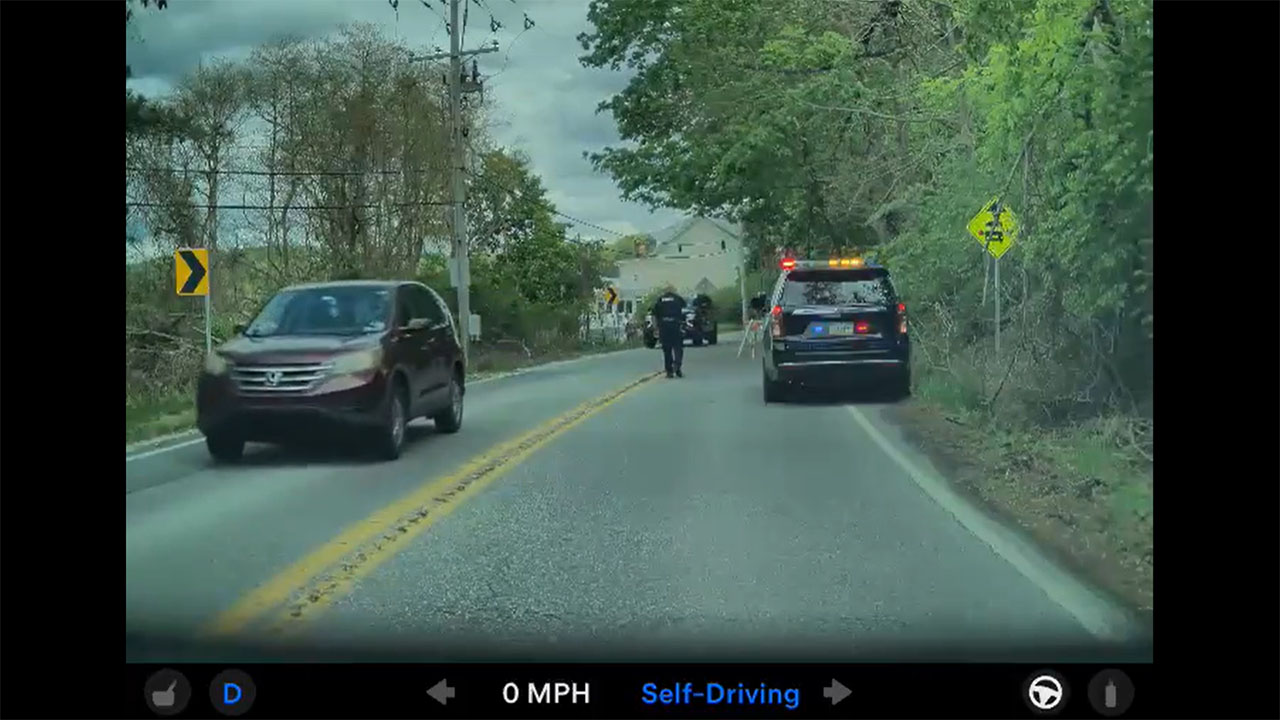

The headline achievement of this update is buried in the technical release notes but carries real-world implications: Tesla's AI team has engineered a system-wide 20% faster reaction time. In driving, where milliseconds can mean the difference between a near-miss and a collision, that is a meaningful gain. The speed boost is the result of underlying optimizations in Tesla's vision-based neural networks, which let the vehicle's "brain" process camera input, identify threats, and initiate corrective actions more quickly than before. For an EV whose drivetrain already responds almost instantly, pairing that physical capability with a faster-thinking AI compounds the safety benefit.
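To put the 20% figure in physical terms, here is a rough back-of-the-envelope sketch in Python. It is illustrative only: the release notes as described here give no absolute latency, so the 0.5 s baseline is a hypothetical placeholder, and "20% faster" is read as a 20% reduction in the time before the system begins to respond. The function names are made up for this example.

```python
# Rough sketch: how much less distance a car covers before it starts to respond
# when reaction latency shrinks by 20%. All numbers are illustrative assumptions.

BASELINE_LATENCY_S = 0.5  # hypothetical baseline, NOT a figure published by Tesla
IMPROVEMENT = 0.20        # assumption: "20% faster" means 20% less latency


def reaction_distance_m(speed_kmh: float, latency_s: float) -> float:
    """Distance travelled at constant speed during the reaction latency."""
    speed_ms = speed_kmh / 3.6  # convert km/h to m/s
    return speed_ms * latency_s


def distance_saved_m(
    speed_kmh: float,
    baseline_latency_s: float = BASELINE_LATENCY_S,
    improvement: float = IMPROVEMENT,
) -> float:
    """Reduction in reaction distance after applying the latency improvement."""
    improved_latency_s = baseline_latency_s * (1.0 - improvement)
    before = reaction_distance_m(speed_kmh, baseline_latency_s)
    after = reaction_distance_m(speed_kmh, improved_latency_s)
    return before - after


if __name__ == "__main__":
    for speed in (30, 50, 100):
        saved = distance_saved_m(speed)
        print(f"{speed} km/h: about {saved:.2f} m less travelled before responding")
```

Under these assumed numbers, the car at 50 km/h would begin responding roughly 1.4 m earlier. The real benefit depends on the actual baseline latency, which is not stated here.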

Beyond Humans: Protecting Smaller Animals and Vulnerable Road Users

While protecting human life is paramount, Tesla has explicitly extended its AI's vigilance to smaller creatures. FSD v14.3 showcases enhanced detection of animals such as dogs, cats, and wildlife that may dart into the roadway, a scenario that traditional radar and less sophisticated systems often miss. This focus on "vulnerable road users" covers not just animals but also cyclists, pedestrians, and scooter riders, whose movements are less predictable than those of other vehicles. The update refines the system's understanding of intent and trajectory for these road users, enabling more nuanced and preemptive responses, such as earlier, smoother braking or subtle steering adjustments.

This development moves the system's capabilities beyond rigid object detection toward a more fluid, real-time risk assessment of dynamic living beings. It is a key step in the journey from driver assistance to true autonomous operation, where the vehicle must navigate a complex biological environment, not just a mechanical one. It also directly addresses a major public concern about self-driving technology: its ability to handle edge cases and unexpected obstacles.

For current Tesla owners with FSD capability, v14.3 is a substantial over-the-air upgrade that materially enhances their vehicle's protective intelligence. Every drive becomes an opportunity for the system to gather more data on these critical scenarios, further refining the neural network in a continuous feedback loop. For investors, the update underscores Tesla's vertical integration advantage: its ability to rapidly deploy deep, systemic software improvements across its entire fleet strengthens its lead in the data and AI arms race that will define the future of transportation.